Hello everyone!

New release today, and it’s a big one!

I know, the version number might suggest it’s a small release, but I underestimated how much we had in this release when tagging it in Git.

It should have been 4.4!

A new Gladys Plus Open API

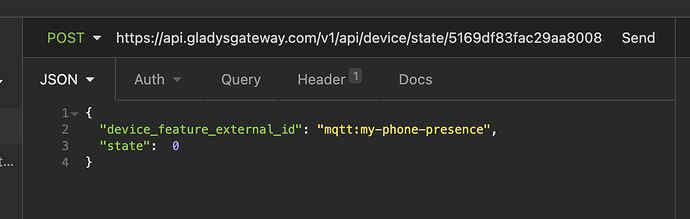

Many of you have asked for a way to send sensor values via the Gladys Plus API.

It’s now possible with a simple API call from anywhere in the world.

I wrote a tutorial on the site:

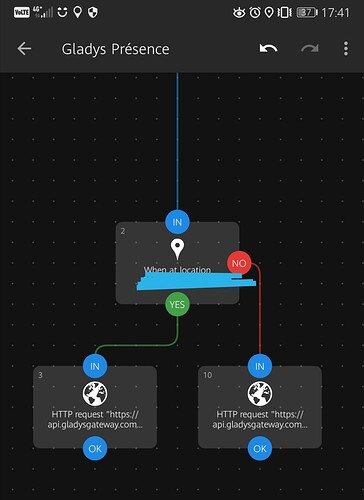

This lets you send requests to Gladys from Tasker, from iOS Shortcuts (I included a demo in the tutorial above), or from any script!

It’s very powerful!
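To give an idea of what "from any script" can look like, here is a minimal Python sketch that pushes one sensor value. Treat the details as assumptions to check against the tutorial: the base URL `https://api.gladysgateway.com/v1/api`, the `/device/state/<open-api-key>` path, and the `device_feature_external_id` / `state` field names are written from memory, and the helper names `build_state_request` and `send_state` are made up for this example.

```python
import json
import urllib.request

# Assumption: base URL and path as described in the Gladys Plus tutorial.
# Verify both before relying on this sketch.
GLADYS_PLUS_API = "https://api.gladysgateway.com/v1/api"


def build_state_request(open_api_key, feature_external_id, state):
    """Build (but do not send) a POST request carrying one sensor value."""
    url = f"{GLADYS_PLUS_API}/device/state/{open_api_key}"
    # Assumption: the API expects these two JSON fields.
    body = json.dumps({
        "device_feature_external_id": feature_external_id,
        "state": state,
    }).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_state(open_api_key, feature_external_id, state, timeout=10):
    """Send the value to Gladys Plus and return the HTTP status code."""
    request = build_state_request(open_api_key, feature_external_id, state)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

A call could then look like `send_state("your-open-api-key", "mqtt:living-room:temperature", 21.5)`, where the key and the external ID are placeholders for your own values. Building the request separately from sending it makes it easy to inspect the URL and payload before anything goes over the network.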

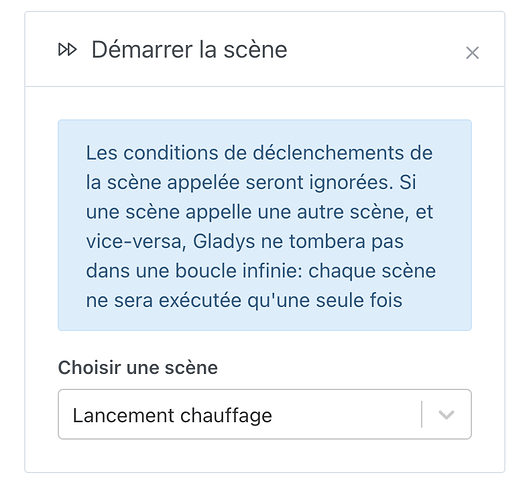

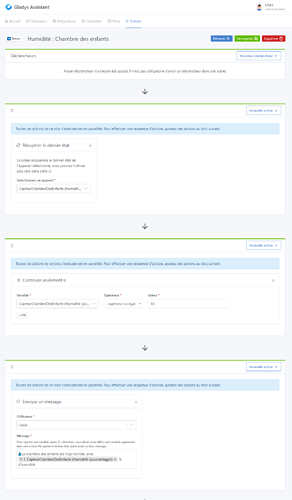

The ability to launch a scene within a scene

It is now possible to launch another scene from within a scene.

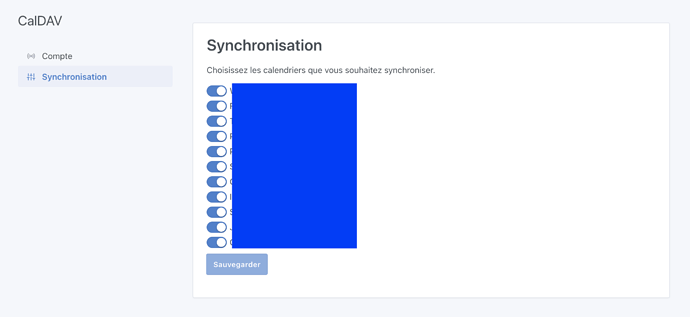

Disable synchronization of a CalDAV calendar

It is now possible to disable synchronization for a given CalDAV calendar, so that only the calendars you actually use are synchronized.

Many new Zigbee2mqtt devices

Here is the list of commits and devices added!

If you think a device is missing, please create a GitHub issue.

- feat(zigbee2mqtt): Add Lidl devices #1186

- feat(zigbee2mqtt): Fix IKEA TRADFRI motion sensor #1187

- feat(zigbee2mqtt): Add Adeo devices #1169

- feat(zigbee2mqtt): Add Philips Hue mode 8718699673147l #1170

Bug fixes

Many small bugs have been fixed, including:

- The chat not responding when asked for a camera image

- The weather box on the dashboard misbehaving when it was combined with the "room devices" box

The complete changelog is available on GitHub.

How to update?

If you installed Gladys with the official Raspbian image, your instance will update automatically in the coming hours. This can take up to 24 hours, so there is no need to worry.

If you installed Gladys with Docker, make sure you are using Watchtower (see the documentation).

Congratulations to everyone who participated in this release!